Why we need a hub for software in science

First let’s take a step back and think about the definition of science:

The intellectual and practical activity encompassing the systematic study of the structure and behaviour of the physical and natural world through observation and experiment.

Now, when we think about what kind of experimentation goes on in science, we mostly think of this kind of sciency (Dear Mr. Spellchecker, that’s a real word) stuff [1]:

In fact, that’s the first result on Google Images, if you search for "science". And if you look at other results, they’re all very similar. Some Erlenmeyer flasks containing fluorescent liquids, some atoms, crazy scientists with weird hairdos or tentacles for arms here and there, and some microscopes, and that’s that. Is this really what science is? Pouring liquids from one glass container into another and heating them with fire? It may have looked like that in the past (hundreds of years ago), but modern science is different. Different how? Well, it’s almost entirely based around computers and software.

Looking at recent issues of Science, roughly half of the papers are software-intensive projects [2], and even for those that aren’t, you can be sure a spreadsheet or three was involved at some point (spreadsheets are code too). So where are the images of people doing science by staring intensely at Excel? Doing science by writing their familiar command line incantations to process raw data? And doing science by editing C code in Emacs 10 years ago, to ensure the New Horizons spacecraft would find its way to Pluto?

It seems to me we have to recognize that software is a large part of science, a much larger part, in fact, than Erlenmeyer flasks, and that software development can be proper science, much like heating liquids in a cup.

Recognition

In business, ever since the rise of Silicon Valley and the realization of what a competitive advantage good software can be, it’s become commonly accepted that software developers are people too. Being a developer is now considered one of the best professions available and if you also happen to be good at it, I think it’s fair to say that the cards are currently dealt in your favor.

On the other side of the fence though, in academia, the same revolution has not happened yet. It’s common for researchers to outsource software development on short-term contracts to software-churning black boxes: specification in, software out, as cheaply as possible. The work goes to anonymous authors who get no credit in papers that rely on their software. It’s even more common for researchers to do their own ad-hoc development and to have to split time between writing code and “doing research” [3], as if software were not a research output by itself.

This climate in academia has several effects and I’ll be as bold as to claim that not one of them is positive. Software quality suffers as there’s almost never time for otherwise recognized good practices, such as automated testing and documentation. Quality suffers additionally because researchers who write software are often not formally trained nor do they have the time to invest in learning more about the art of programming, because writing software is not considered “real work”, so it’s done as quickly as possible. After such software is written and the paper using it is published, there is almost never any time (or motivation) for its maintenance, and unless there exists that rare second paper which reuses it, it falls into disuse and disrepair, its source code buried on an old laptop in some PhD student’s attic.

By building a hub for research software, where we would categorize it and aggregate metrics about its use and reuse, we would be able to shine a spotlight on its developers, show the extent, importance and impact of their work, and by doing so try to catalyze a change in the way they are treated. It won’t happen overnight, and it perhaps won’t happen directly, but for example, if you’re a department head and a visit to our hub confirms that one of your researchers is in fact a leading expert on novel sequence alignment software, while you know her other “actual research” papers are not getting traction, perhaps you will allow her to focus on software. Given enough situations where split-time researchers/software developers are discovered to be particularly impactful in code, it might establish a new class of scientists: scientists dedicated to software development.

But in order for that to even have a chance of happening, we need to, first, recognize software as a primary output of science, and second, fit it snugly within the highly complex and well-oiled social machine that is academia. And we all know what the oil is that lubricates the cogs of academia. It’s credit.

Credit

As unfortunate as it may seem, very little in academia happens by itself. There exists an intricate reward system that fuels all of science, and while the academic reward system is often heavily criticized for its detrimental side-effects (e.g. publish or perish), it has to be doing a lot of things right to be able to bring us discoveries such as general relativity or the Higgs boson. So yes, to misquote someone famous, it’s absolutely the worst system, but it’s also better than all the rest. And we need to be able to connect research software to it.

Similar to how Figshare has enabled researchers to get credit for outputs like datasets and posters (and this credit has already been used in successful tenure applications), our hub would allow researchers to get credit for software, not only by providing a central location where it can be stored and shared, but also by collecting and displaying information about how and where it is used and reused.

Due to the way software is uniquely structured compared to other research outputs, specifically because dependencies are explicit by necessity, the credit enigma could be solved in interesting ways, such as crawling the dependency tree to assign transitive credit [4], i.e. getting credit not only when your software is cited, but also a fraction of it when your software is used as a dependency. And down, down the rabbit hole.
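To make the rabbit hole a bit more concrete, here is a minimal sketch of how transitive credit could be computed by walking a dependency tree. It is only an illustration under stated assumptions, not the scheme from [4] verbatim: the `CREDIT_MAP` structure, the product and people names, and the `transitive_credit` function are all made up for this example, and each product is assumed to split a total weight of 1.0 between its authors and the products it depends on.

```python
from collections import defaultdict

# Hypothetical credit map (invented for illustration): every product splits
# a total weight of 1.0 between people and the products it depends on.
CREDIT_MAP = {
    "paper": {"people": {"alice": 0.75}, "products": {"aligner": 0.25}},
    "aligner": {"people": {"bob": 0.5}, "products": {"parser": 0.5}},
    "parser": {"people": {"carol": 1.0}, "products": {}},
}


def transitive_credit(product, credit_map, weight=1.0, totals=None, path=()):
    """Walk the dependency tree and accumulate each person's share of credit."""
    if totals is None:
        totals = defaultdict(float)
    if product in path:
        # Guard against dependency cycles: stop instead of recursing forever.
        return totals
    entry = credit_map[product]
    # Direct contributors get their share of the weight that reached this product.
    for person, share in entry["people"].items():
        totals[person] += weight * share
    # Dependencies pass a fraction of that weight further down the tree.
    for dependency, share in entry["products"].items():
        transitive_credit(dependency, credit_map, weight * share, totals,
                          path + (product,))
    return totals


credit = transitive_credit("paper", CREDIT_MAP)
for person, share in sorted(credit.items()):
    print(f"{person}: {share}")
```

In this toy setup, the author of the hypothetical `parser` library receives a share of the credit for the paper even though the paper never cites the parser directly, which is the point of crawling the dependency tree.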

OK, so at this point we have a scientist software developer who is not only recognized within her department and among her peers, but also systematically rewarded for her work on software. In other words, she now has a supportive environment which enables her to produce good science by producing good software. I think she’s happy with that.

But what about her software? Is the software happy? Now I don’t have a randomized double-blind study to back me here, but it feels to me that software is happy when it gets reused — and it can only be reused if it’s found.

Discovery

Research software is often incredibly specific, and trying to Google for it is, more often than not, an exercise in futility. Imagine, for example, that the problem you’re dealing with is DNA sequence data with worsening quality reads at each end of the sequence. Let’s try and Google that: “worsening quality reads each end dna”. Nothing useful, so let’s make it a bit more specific with some keywords: “worsening quality reads sequence 5’ 3’ software dna”. There are a number of ads, but nothing looks very promising, and so you Google forth... This “keyword tango” could go on for so long that you might decide it’s a better use of your time to just write some code on your own. And, no offense, but that’s just about the worst thing that could happen.

If, on the other hand, you could do a quick search on our hub, where the entire web of results is helpfully narrowed down to only research software, and software is already neatly organized into categories, you might instead find “sickle”, a very well-cited piece of software, which deals specifically with:

Sliding windows along with quality and length thresholds to determine when quality is sufficiently low to trim the 3’-end of reads and also determines when the quality is sufficiently high enough to trim the 5’-end of reads.

Aha! So that’s the magic keyword combo!

Finding this software and deciding to reuse it (and perhaps also fix any bugs you encounter) is a very economical choice, especially compared to unknowingly duplicating this work to write your own solution. Not only is it economical, it’s also very much in the spirit of science — standing on the shoulders of giants instead of building your own flimsy castle.

Current state of affairs

You know what the absolutely coolest part of all of this is? It’s that as of a few days ago, such a hub does actually exist! Enter Depsy. Made by the same folks that gave us ImpactStory, and funded by the NSF, Depsy focuses on software, e.g. Pyomo, and the people writing that software too, e.g. R superstar Hadley Wickham. It currently only handles Python and R code, probably among the most popular programming languages in research, but I’m sure it will quickly grow to handle other languages as well. It's still under heavy development, judging by its GitHub repository, so do be patient, but also try to contribute if you can!

There is one other central hub like it, and that’s my own hackathon project from a while back, ScienceToolbox (GitHub), but I have to very reluctantly admit that it’s currently unmaintained, as there has never been enough spare steam to keep it going after its hackday debut. This also brings up the crucial question of how these hubs can be made sustainable, but let’s leave the topic of sustainable open science infrastructure for another day.

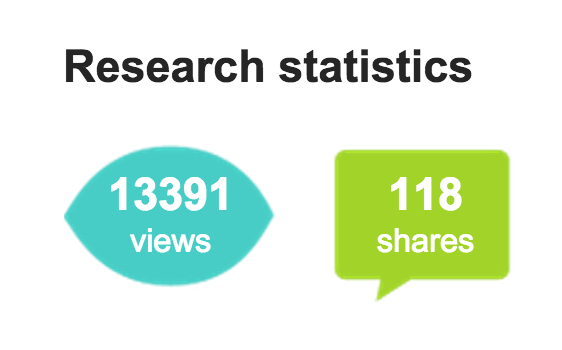

A hub like this is necessarily made up of a number of puzzle pieces, and in terms of collecting metrics about research software, Martin Fenner has experimentally used his open source metrics software (Lagotto) and ScienceToolbox’s list to try and figure out what useful data lies out there.

There is also a great curated software collection for the field of astrophysics, but after this, there isn’t much else out there, and I’m more than happy to be corrected if there is (and will gladly update this section with more information)!

Update Oct 20: Martin Fenner shared a link to the UK Community of Research Software Engineers, which aims to address the exact problems we discussed above, i.e. lack of recognition and rewards for research software developers.

Conclusion

In a way, the existence of a hub for software in science is held back by the same issues that plague research software itself: the need for it is not really recognized by funders, and the path from effort (building it) to a reward (seeing it effect a positive change) is very winding indeed. I do hope, if nothing else, that I’ve at least slightly moved some needles in the right direction with this post, and that you’ll agree with me when I say that it’s high time to enable research software developers to get the recognition and respect they deserve.

Feel free to discuss this post in HN comments: https://news.ycombinator.com/item?id=10418147.

References:

[1] https://commons.wikimedia.org/wiki/File:Science-symbol-2.svg

[2] Daniel S. Katz. Software Program and Activities. http://casc.org/meetings/14sep/Dan%20Katz_Software_CASC.pdf

[3] Derek Groen, et al. Software development practices in academia: a case study comparison. http://arxiv.org/abs/1506.05272

[4] Daniel S. Katz, Arfon M. Smith. Implementing Transitive Credit with JSON-LD. http://arxiv.org/abs/1407.5117

- Last photo is by Graham Turner for the Guardian. Appeared in this piece.
